For those of you who have been in Pro AV for decades, confusion is nothing new. Remember the analog sunset? Remember HDCP? Remember going over IP? Fortunately, confusion is a phenomenon that disappears over time.

While restricted from travelling, and over the course of countless Zoom meetings, I have found a new source of confusion in our Pro AV industry: Dolby Vision. From equipment vendors to end users, Dolby Vision creates confusion.

A Brief History of Dolby Vision

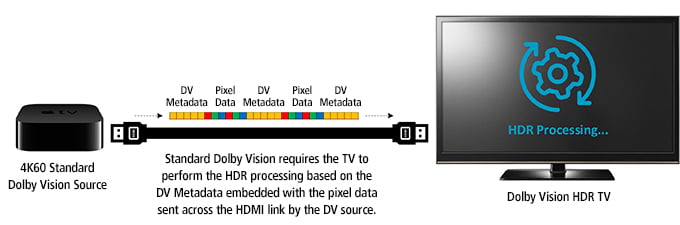

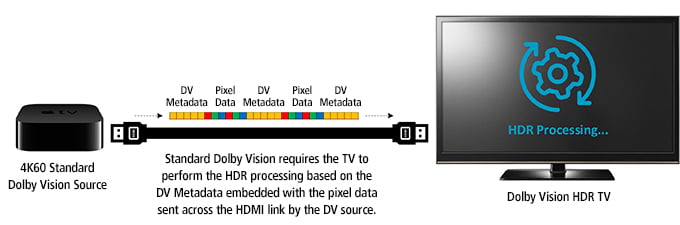

Let’s try to understand why with a bit of history. Back in 2014, Dolby Laboratories introduced the first Dolby Vision standard as a content mastering and delivery format similar to the HDR10 media profile. At that time, the main improvement over HDR10 was the capability to support dynamic metadata over existing HDMI links. Dolby Vision (DV) did not require a new HDMI standard because it embedded the metadata into the video signal itself. Knowing that the then-current versions of HDMI would not pass the Dolby Vision dynamic metadata, Dolby developed a way to carry it across HDMI interfaces as far back as version 1.4b. It took some time for the HDMI specification to catch up, and even version 2.0a supported only static metadata.

In other words, a DV source encoded metadata in the pixel data values, and the sink (TV) extracted it in order to perform the required video processing based on this reconstructed metadata. Of course, this mechanism pushed significant complexity and processing requirements onto the sink device. Moreover, it destroyed AV synchronization between different TVs, because different TVs introduce different latencies (video delays) when performing this computationally intensive DV task.

Fast Forward Years Later

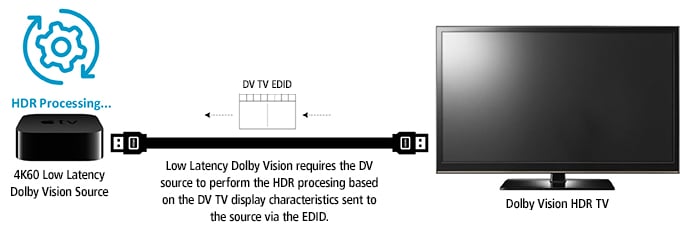

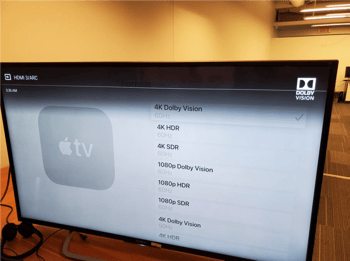

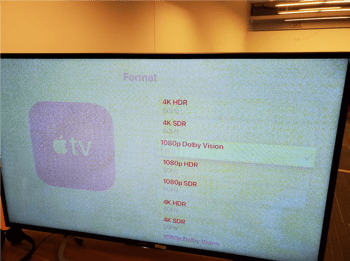

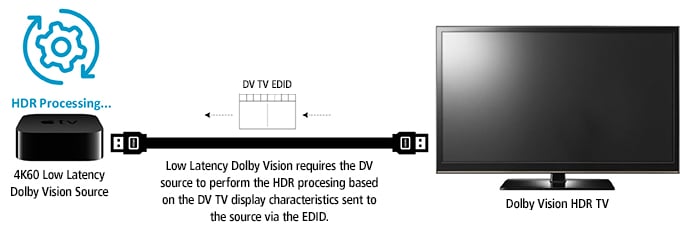

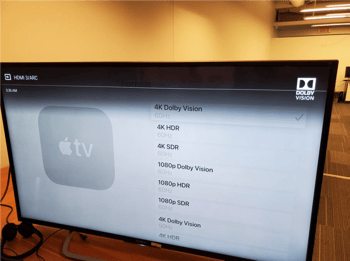

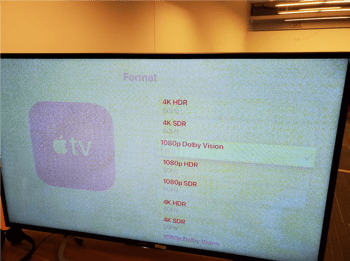

Dolby Laboratories later specified (Dolby Vision HDMI transmission specification, version 2.9) a new DV mode of operation: Low Latency Dolby Vision (LLDV), as opposed to STD, the older standard mode of operation for Dolby Vision. LLDV is the evolution of DV. This mode is now mandatory for DV devices, whereas the STD mode is no longer required. Basically, with LLDV, the processing is done at the source. This eliminates the problems associated with high latencies and also removes the need to transfer dynamic or static metadata (metadata is not sent to the sink in LLDV). The LLDV mode is doing wonders for source devices, sink devices and Pro AV equipment.

LLDV is Much Better for Source Devices

In one word, latency. By shifting the computationally intensive task of processing metadata and tone mapping into the source rather than the sink device, the latency from source to display is much lower (hence the name “Low Latency”). The source device is also where complex algorithms are best suited to run. Microsoft never implemented the old STD DV, but with the newer LLDV mode, it announced that its Xbox One now supports LLDV (system update 1810). The upcoming Xbox Series S and Series X will also fully embrace LLDV, even for game rendering.
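To make the idea of source-side processing more concrete, here is a minimal sketch of tone mapping done before the signal leaves the source. It is not Dolby's algorithm, which this post does not describe. The soft-knee curve, the function names `tone_map_nits` and `prepare_frame_for_sink`, the `knee` parameter, and the assumption that the source already knows the sink's peak luminance are all hypothetical and chosen only for illustration.

```python
from typing import List


def tone_map_nits(scene_nits: float, sink_peak_nits: float, knee: float = 0.75) -> float:
    """Map a scene luminance (cd/m^2) into the range a sink can display.

    Illustrative soft-knee curve (an assumption, not Dolby's method):
    values below knee * sink_peak_nits pass through unchanged, and values
    above it are compressed so the result stays below sink_peak_nits.
    """
    if scene_nits < 0 or sink_peak_nits <= 0 or not 0 < knee < 1:
        raise ValueError("invalid luminance or knee value")
    knee_nits = knee * sink_peak_nits
    if scene_nits <= knee_nits:
        return scene_nits
    headroom = sink_peak_nits - knee_nits
    excess = scene_nits - knee_nits
    # Rational roll-off: slope 1 at the knee, approaching the peak asymptotically.
    return knee_nits + headroom * excess / (excess + headroom)


def prepare_frame_for_sink(frame_nits: List[float], sink_peak_nits: float) -> List[float]:
    """Tone map every sample at the source, so no metadata has to follow the frame."""
    return [tone_map_nits(value, sink_peak_nits) for value in frame_nits]
```

With this sketch, a 4000-nit highlight prepared for a hypothetical 600-nit sink comes out just under 600 nits, while a 100-nit midtone is left untouched. The point is only that the sink receives finished pixels and has nothing left to compute.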

LLDV is Much Better for Sink Devices

As stated by Dolby itself (Dolby Vision HDMI transmission specification, version 2.9), removing the transfer of metadata to the sink and performing computations at the source greatly reduces sink requirements. This translates to greater sink support and interoperability. Sony officially announced two years ago that it will only support LLDV going forward, and not the STD mode. Even the earlier A1 OLED, X93E, X94E, and Z9D models had the older STD mode removed through firmware updates.

LLDV is Much Better for Pro AV Devices

LLDV uses “normal” encoding: native 12-bit 4:2:2 at up to UHD resolution, a D65 white point, the BT.2020 color matrix, and an EOTF compliant with SMPTE ST 2084. Compared to STD Dolby Vision, there is no more finicky packing of metadata within the active picture pixel data, and no ICtCp color space. In other words, LLDV is much friendlier to distribute. Even a non-DV-capable TV receiving an LLDV signal will display it correctly. With STD, the result was image corruption, which used to plague us. Latency is another huge improvement with LLDV: AV synchronization between different TVs is now possible.
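For readers who want to see what the SMPTE ST 2084 transfer function mentioned above looks like, here is a short sketch of the PQ EOTF and its inverse using the constants published in the standard. The function names are my own, and the signal is treated as a normalized value between 0 and 1, so the mapping to 12-bit code values and video range is deliberately left out.

```python
# Constants from SMPTE ST 2084 (PQ), written as the exact fractions in the standard.
M1 = 2610 / 16384
M2 = 2523 / 4096 * 128
C1 = 3424 / 4096
C2 = 2413 / 4096 * 32
C3 = 2392 / 4096 * 32

PEAK_NITS = 10000.0  # PQ is defined up to 10,000 cd/m^2


def pq_eotf(signal: float) -> float:
    """Convert a normalized PQ signal in [0, 1] to display luminance in cd/m^2."""
    if not 0.0 <= signal <= 1.0:
        raise ValueError("signal must be within [0, 1]")
    p = signal ** (1 / M2)
    return PEAK_NITS * (max(p - C1, 0.0) / (C2 - C3 * p)) ** (1 / M1)


def pq_inverse_eotf(nits: float) -> float:
    """Convert display luminance in cd/m^2 to a normalized PQ signal in [0, 1]."""
    if not 0.0 <= nits <= PEAK_NITS:
        raise ValueError("luminance must be within [0, 10000] cd/m^2")
    y = (nits / PEAK_NITS) ** M1
    return ((C1 + C2 * y) / (1 + C3 * y)) ** M2
```

As a sanity check, 100 cd/m² lands at roughly half of the signal range, which is why PQ leaves so much headroom for highlights. Because this curve is fixed and absolute, a display that understands ST 2084 can interpret the signal without any accompanying dynamic metadata.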

LLDV distributed to non-DV TV

STD DV distributed to non-DV TV

In conclusion, Dolby has clearly improved Dolby Vision with LLDV. Over the past two years, all appliances claiming support for DV have supported LLDV. And of course, all Semtech Pro AV chipsets fully support LLDV, whether for Software Defined Video over Ethernet (SDVoE™) IP-based video distribution using BlueRiver®, or point-to-point AV extension over both copper cables and fiber using AVXT.

We can now focus on other things causing confusion, such as the myth that compressing 4K to under 1 Gbps provides good-quality video. I will leave that for another blog. For now, I invite you to learn more about how the game-changing capabilities of the BlueRiver AV processor and AVXT AV extender ASICs can support your 4K and HDR vision at the links below.

Semtech®, the Semtech logo, and BlueRiver® are registered trademarks or service marks of Semtech Corporation or its affiliates. Other product or service names mentioned herein may be the trademarks of their respective owners.
